Testing at speed: Using Cloud Build for continuous integration with Ruby-on-Rails

How to use Cloud Build for continuous integration to test your Rails applications, fast.

Cloud Build is one of those products that doesn’t get much of the spotlight, but if used correctly, it should be something developers interact with multiple times per day! In this post, I’ll discuss testing a Ruby on Rails (RoR) application using Docker and Docker Compose to test reliably, quickly and efficiently by reusing containers and local networks.

Ruby on Rails is a popular web framework that helps developers quickly build web applications by taking an opinionated approach to how web apps are built. Testing with Rails is relatively straightforward, and you can leverage test runners such as Minitest or RSpec to test your application. When working with other developers and building rapidly, having reliable, segregated, testable pieces of your application is crucial to successful deployments and to delivering functions and features. Using Docker as part of our build and GitHub as our source control, we can create continuous integration with Cloud Build on GCP, which will form the first step in our journey on the road to production.

Cloudbuild Configuration

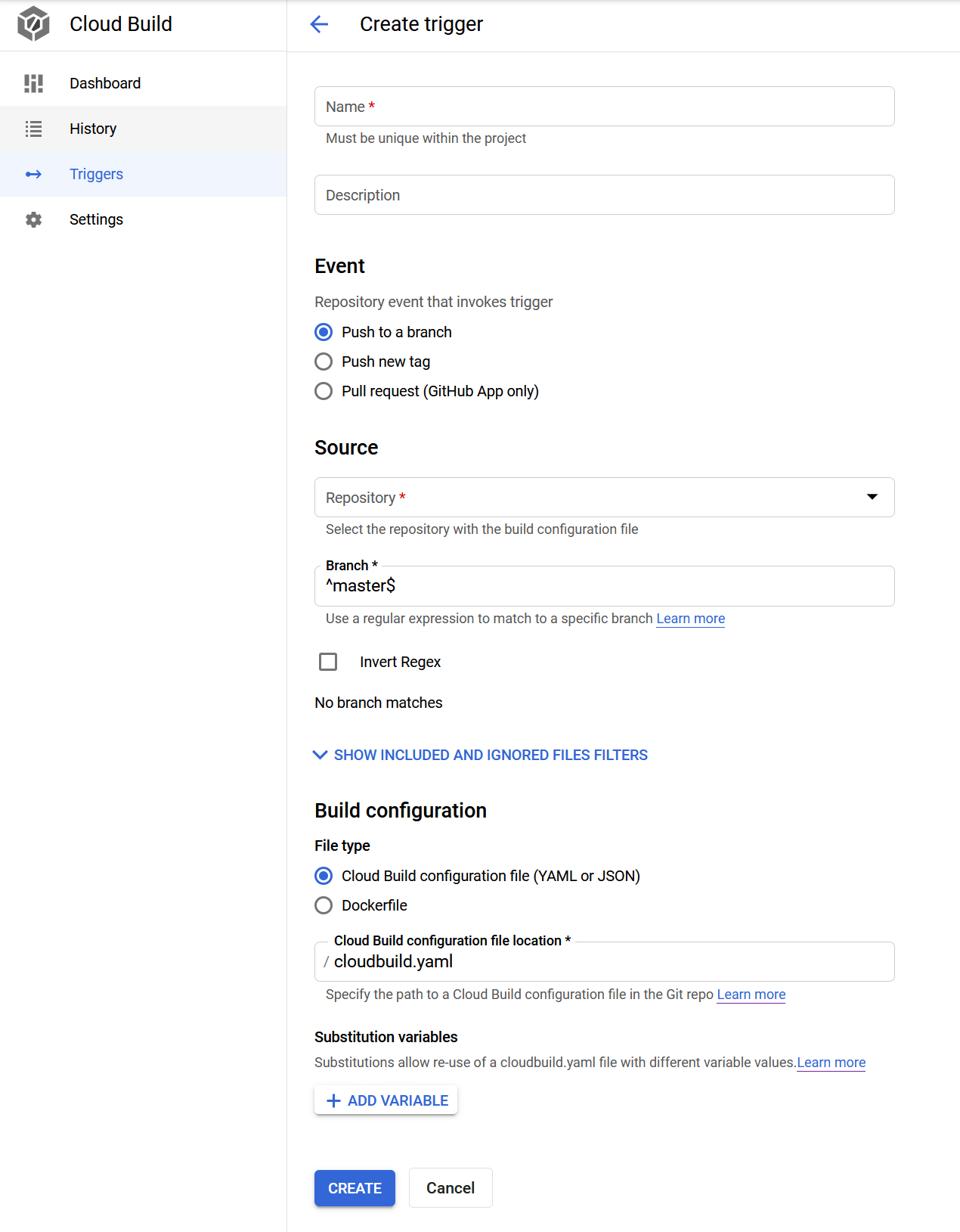

To begin, you’ll need a Github repository setup and a project setup on Google Cloud, along with the cloudbuild API enabled to facilitate the triggers which will kick off the builds. Once done, jump into the GCP console and link your project to the github repository, and then configure a trigger for cloudbuild to run. Here’s an example:

Enter a name for the trigger and an optional description, and then select an event for your trigger to fire on. In my case, I prefer to only run builds on open pull requests, and since I only have the master branch and whatever feature branch I am working on, the base branch to match is master. You can use the pre-filled regex (^master$), or you can write your own if you have something funky you’d rather fire a build on. The last required setting is the build configuration, and in this case, we’ll specify a cloudbuild.yaml file since we want a bit more control as well as the ability to use custom builders.
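If you’d rather keep the trigger in source control than click through the console, the same settings can be written down as a trigger definition. The following is only a sketch: the trigger name, description, repository owner and repository name are placeholders I’ve made up, and the field names follow the Cloud Build BuildTrigger resource as I understand it, so check them against the current documentation before relying on them.

```yaml
# trigger.yaml: hypothetical pull-request trigger definition
name: rails-ci-pull-request           # placeholder trigger name
description: Run RSpec on pull requests against master
github:
  owner: your-github-user             # placeholder: GitHub user or organisation
  name: myapp                         # placeholder: repository name
  pullRequest:
    branch: ^master$                  # base branch regex, as in the console form
filename: cloudbuild.yaml             # build configuration file in the repo
```

A file like this can typically be loaded with gcloud builds triggers import --source=trigger.yaml, assuming the repository has already been connected to the project.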

Cloudbuild YAML File

Once we’ve created the trigger, the next step is to set up the configuration Cloud Build needs in order to function as an effective CI tool for Rails.

```yaml
steps:
  # Pull the previously built image so it can seed the build cache.
  # "|| exit 0" lets the very first run continue when no image exists yet.
  - name: "gcr.io/cloud-builders/docker"
    entrypoint: "bash"
    args:
      - "-c"
      - |
        docker pull gcr.io/$PROJECT_ID/myapp:testing || exit 0
  # Build the Rails image, reusing layers from the pulled image.
  - name: "gcr.io/cloud-builders/docker"
    args:
      [
        "build",
        "--cache-from",
        "gcr.io/$PROJECT_ID/myapp:testing",
        "-t",
        "gcr.io/$PROJECT_ID/myapp:testing",
        ".",
      ]
  # Run the test suite with the community docker-compose builder.
  - name: "gcr.io/$PROJECT_ID/docker-compose"
    args: ["--file", "docker-compose-ci.yml", "run", "rspec"]
images:
  - "gcr.io/$PROJECT_ID/myapp:testing"
```

If you’ve not seen a cloudbuild file before, let’s break it down. At the top of the file is the steps keyword, which holds the list of steps for your build to run. The first step in this example uses the docker builder to pull a copy of the previously built image from the project’s container registry. If the image doesn’t exist yet, the step ignores the failure and continues. The reason I do this is outlined in this post, which talks about using the cache for faster building of images. The images field at the bottom tells Cloud Build to push the freshly built image back to the registry once the build succeeds, so the next run has something to pull.

The next step in the file is to build the Rails Docker image, so that we can use it for testing in the third step. You’ll notice that we use the --cache-from flag to refer to the previously built image we pulled down in the previous step. The reason we do this is mostly to speed up build times for our image. We don’t need or want to go through installing the entire gemset every time, as well as updating the local container, installing system dependencies etc. All we want to do is just test our code. By doing this cache step, we go from about a 6-7 minute build down to about 90 seconds.

Moving on. The final step in our build run is to actually run the tests. In this case you’ll notice that we aren’t using a GCP-provided builder (or cloudbuilder, I think they call them). Because we’re using docker-compose, and that builder isn’t officially provided by GCP, we’ll need to utilise one of the community-provided cloudbuilders. Jump on over to https://github.com/GoogleCloudPlatform/cloud-builders-community/tree/master/docker-compose and clone the repo. To make this builder available to our builds, we need to build it and store it in our own project’s GCR repo. Follow the instructions in the readme on that GitHub page to get the builder into your project. Once done, take a look at the final step. You’ll see we run docker-compose --file docker-compose-ci.yml run rspec as the command in Cloud Build. This is the step which runs the RSpec test runner. If you’re not using RSpec, you’ll need to adjust this in the files you’ll see below.

Cloudbuild Docker-Compose config

Let’s take a look at our docker-compose file, which we’ll be using in our cloudbuild test run.

```yaml
version: "3"
services:
  postgres:
    image: postgres:alpine
    ports:
      - 5432
    environment:
      POSTGRES_USER: postgres
      POSTGRES_PASSWORD: postgres
      POSTGRES_DB: myapp_test
  rspec:
    # CI Testing (CloudBuild)
    image: gcr.io/<YOUR PROJECT ID>/myapp:testing
    volumes:
      - .:/app
    command: "./run-tests.sh"
    depends_on:
      - postgres
    environment:
      DB_HOST_URL: postgres
```

Whilst I won’t go into detail about docker-compose in this post, as we’re focusing on Cloud Build, for the sake of completeness I’ll point out that the only external service this application depends on is Postgres. To test efficiently, we include it in our docker-compose file so that we have a database to connect to when we run our tests. The second service listed here is rspec, which is the service we told docker-compose to run in the Cloud Build step. With that service selected, docker-compose starts postgres first to satisfy depends_on, and then starts the “service” by running a script. The image it uses is the one from our GCR repo, which we’ve just built in the previous step.
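For the rspec container to actually reach that database, the Rails app has to read DB_HOST_URL somewhere. The app’s database configuration isn’t shown in this post, so the following is only a sketch of what the test section of config/database.yml might look like, assuming the credentials and database name from the docker-compose file above and a fallback of localhost for running outside Docker.

```yaml
# config/database.yml (test section only): illustrative sketch
test:
  adapter: postgresql
  encoding: unicode
  # "postgres" resolves to the postgres service on the docker-compose network
  host: <%= ENV.fetch("DB_HOST_URL", "localhost") %>
  username: postgres
  password: postgres
  database: myapp_test
```

Rails runs this file through ERB before parsing it as YAML, which is why the ENV lookup works here.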

```sh
#!/bin/sh
bundle exec rake db:test:prepare && bundle exec rspec spec
```

This is our run-tests.sh file. In short, it ensures our test database is ready, and then runs the rspec command against the spec directory.

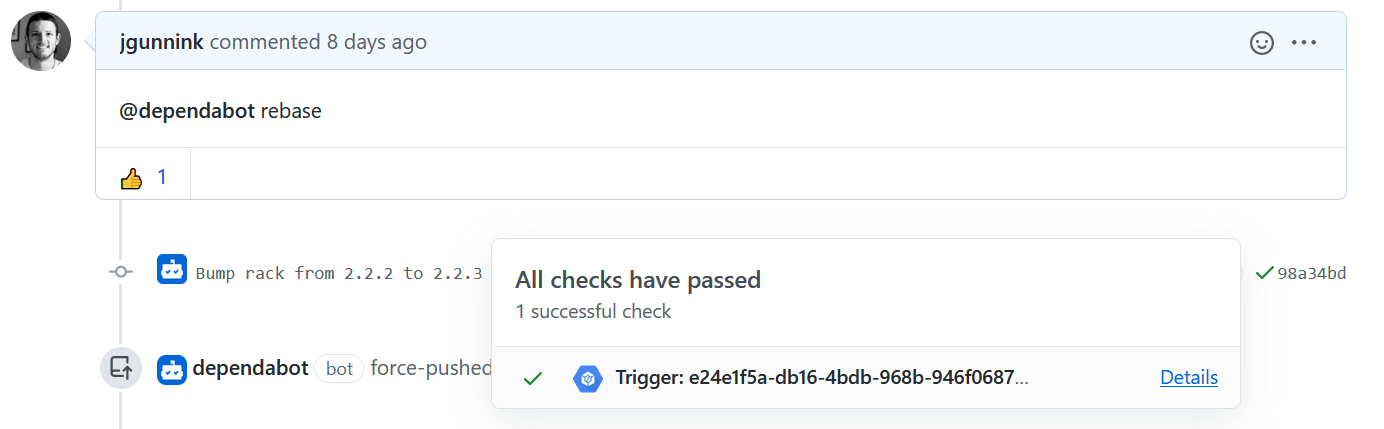

And that’s it. With a couple of files, we’ve built a robust CI tool which uses our Rails dockerfile and tests against it. Now that you’ve integrated cloudbuild into your Github account for testing, when you open up a pull-request to merge code in, the tests should automatically kick off and provide a status report back to Github letting you know the status of pass/fail for that test run. Here’s an example of what I mean:

Bonus

Once you start developing your application a bit more and adding extra features, you may need to bring in more services. Redis is a popular choice, as it works well with Action Cable for pub/sub features and for offloading tasks like email, so that ActiveJob can send messages in the background rather than locking up the web process. To facilitate this, you can add Redis in a similar fashion to what we’ve done for the postgres service, and then make sure it’s started first by adding it to the depends_on list of the service that uses it (the web service, or rspec in the CI file).
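As a rough sketch of that change, here is how the services section of docker-compose-ci.yml might look with Redis added (the existing postgres service stays as it is). The REDIS_URL variable name is an assumption on my part; use whatever your cable.yml or job adapter configuration actually reads.

```yaml
services:
  redis:
    image: redis:alpine
  rspec:
    image: gcr.io/<YOUR PROJECT ID>/myapp:testing
    volumes:
      - .:/app
    command: "./run-tests.sh"
    depends_on:
      - postgres
      - redis                            # start Redis before the test run
    environment:
      DB_HOST_URL: postgres
      REDIS_URL: redis://redis:6379/0    # assumed variable name
```

Bear in mind that depends_on only controls start order; it doesn’t wait for the service to be ready to accept connections.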