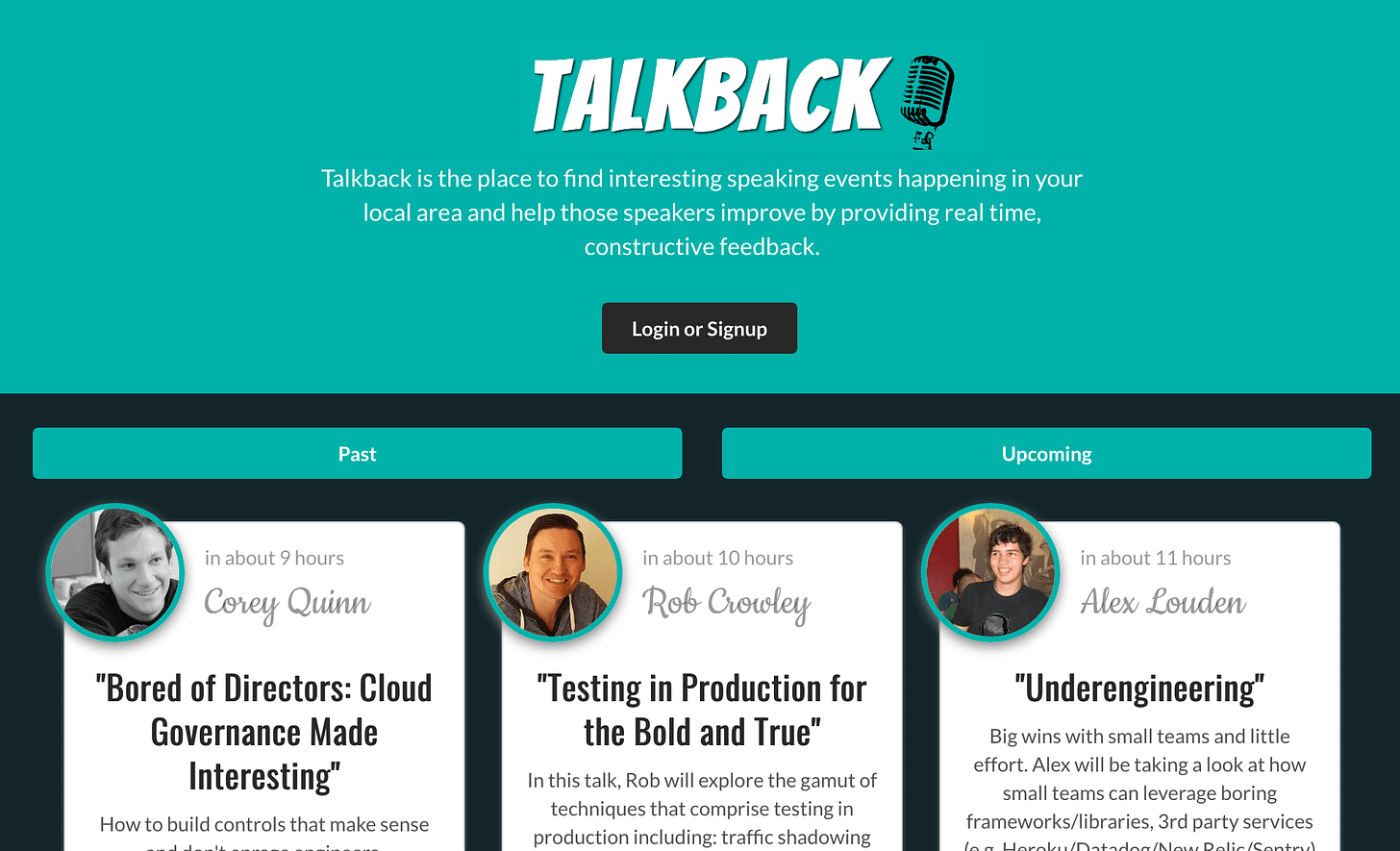

Talkback: A serverless web application built with GraphQL, Apollo and AppSync

A few weeks ago my employer, Mechanical Rock, hosted a conference in Perth, Western Australia, covering all things cloud computing. It was called Latency, with the tagline:

The only local conference dedicated to building secure, high performing cloud native applications.

For the past few months I’d been working with AppSync, a relatively new piece of tech which had only just come out of beta and was made “Generally Available” in April this year. A colleague and I decided we wanted to play around with it and help make the conference successful not just for the attendees, but also for the speakers. To that end, the idea of Talkback was born.

In short, Talkback is a completely serverless, cloud-native application which was used during the event to let attendees give feedback to the speakers: a 1-5 star rating and a comment on each talk.

Some people laugh when the term serverless is used and say - there’s no such thing! Servers must exist to serve the content. Of course, there are servers operating in the background, including the one hosting this static page, so the term is a little misleading. In short, serverless means that you as a developer or owner of a product do not need to maintain any servers. In addition, most serverless infrastructure is pay-for-what-you-use. This means that if you don’t have any users, or your traffic for the billing month was so small that you didn’t exceed the free usage limits, then it will cost you absolutely nothing. It’s a great pricing model for small businesses or startups that only want to pay for the stuff they actually use. Being a cheap-arse, this model suits me perfectly. Anyway, I digress.

The tech was built on Amazon Web Services, and it involved a fair few moving pieces. A quick run down of the stack and technologies:

Semantic UI and the Create React App bootstrapper (in TypeScript)

S3 to store the React app

GraphQL (via AppSync) as the API

DynamoDB as the backing database

Cognito using OAuth or email/password as the authentication mechanism

CodeCommit (AWS’s GitHub)

CodeBuild for testing and compilation

CodePipeline for executing test runners and the build

CloudFront for the CDN and SSL termination

Route 53 for the domain registration and management

Lambda function for some back-end processing of average score calculations
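To give a feel for the kind of work that Lambda function did, here is a minimal sketch of an average score calculation. The `Vote` shape, the field names and the rounding to one decimal place are all assumptions for illustration; this is not the code we actually deployed.

```typescript
// Hypothetical shape of a vote; the field names are assumptions.
interface Vote {
  talkId: string;
  score: number; // 1-5 stars
}

// Returns the mean score rounded to one decimal place,
// or null when the talk has no votes yet.
function averageScore(votes: Vote[]): number | null {
  if (votes.length === 0) {
    return null;
  }
  const total = votes.reduce((sum, vote) => sum + vote.score, 0);
  return Math.round((total / votes.length) * 10) / 10;
}

const sampleVotes: Vote[] = [
  { talkId: "talk-1", score: 5 },
  { talkId: "talk-1", score: 4 },
  { talkId: "talk-1", score: 4 },
];

console.log(averageScore(sampleVotes));
```

Returning `null` for a talk without votes keeps "no rating yet" distinct from a rating of zero, which is something the UI would probably want to show differently.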

Using create-react-app to get up and running quickly, we started building out the application using components from Semantic UI. We captured our business rules as behaviour driven development (BDD) tests, which helped us decide as early as possible how the software should behave, so we didn’t waste time building functionality we didn’t need. With our BDD tests written and our React components built, we just needed to make the application behave as intended. A core part of the application was authentication: we didn’t want users to be able to vote twice for the same talk, and to prevent that we had to have some concept of users. AWS Cognito provides a very nice tie-in to AppSync, and through the use of AWS Amplify we were able to easily wire in a connection to Cognito.
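The "one vote per user per talk" rule can be sketched in memory like this. Everything here is hypothetical (`VoteStore`, `VoteInput`, and the choice to reject a repeat vote rather than overwrite it); in the real app the check has to happen on the backend against the authenticated user, not in a local map.

```typescript
// Hypothetical input shape; userId stands in for the authenticated user's identifier.
interface VoteInput {
  userId: string;
  talkId: string;
  score: number;
  comment?: string;
}

class VoteStore {
  private votes = new Map<string, VoteInput>();

  // Stores the vote only if this user has not already voted on this talk.
  // Returns true when the vote was stored, false when it was rejected.
  submit(vote: VoteInput): boolean {
    if (!Number.isInteger(vote.score) || vote.score < 1 || vote.score > 5) {
      throw new RangeError("score must be a whole number from 1 to 5");
    }
    // A composite key of user and talk is what makes a second vote detectable.
    const key = JSON.stringify([vote.userId, vote.talkId]);
    if (this.votes.has(key)) {
      return false;
    }
    this.votes.set(key, vote);
    return true;
  }
}

const store = new VoteStore();
console.log(store.submit({ userId: "alice", talkId: "talk-1", score: 5 })); // stored
console.log(store.submit({ userId: "alice", talkId: "talk-1", score: 1 })); // rejected
```

The point of the sketch is the composite key: without a user identity there is nothing to combine with the talk id, which is why authentication had to come first.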

We used the AWS developer tools for our continuous integration and continuous deployment, making use of Pipeline Pete’s inception pipeline to get up and running quickly. CodeCommit served as the git repo, though if you’re working with more than 2 or 3 developers you may have a frustrating experience: regardless of which files you touch, if two PRs are open and one gets merged, there WILL ALWAYS BE CONFLICTS! WHY! I don’t know. I’ve submitted numerous feedback forms through the online interface, but this never seems to get resolved. Also, AWS, if you’re reading: being able to ‘rebase and merge’ would be a nice addition.

Using AppSync’s starter templates we were able to get the AppSync instance going, and it connects really nicely to DynamoDB, which we used as our serverless datastore. Using a combination of adjacency lists and smart global secondary indexes, we were able to serve efficient queries to the clients requesting them while minimising the impact on the database. One of the downsides of DynamoDB, and really of any NoSQL database, is that you need to have your access patterns and projected queries well thought out before your application is built, which can be a challenge. We did go through one rewrite of our database to get to a point we were happy with. Once we knew how we wanted to access the data, it was relatively simple after that. Our BDD tests helped us immensely here. Based on how we expected our various examples and scenarios to play out, we were able to identify two or three strong access patterns for our data, and as a result we got highly efficient queries, which minimised our RCU (Read Capacity Unit) costs.
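If adjacency lists are new to you, here is a rough in-memory sketch of the idea. The key formats (`TALK#1`, `VOTE#alice`, `META`) and the `query` helper are made up for illustration and are not our actual table design; the helper only imitates a DynamoDB Query with an exact partition key match and a `begins_with` condition on the sort key.

```typescript
// Hypothetical single-table layout: every item has a partition key (pk)
// and a sort key (sk), plus whatever attributes that item type needs.
interface Item {
  pk: string;
  sk: string;
  [attribute: string]: string | number;
}

const table: Item[] = [
  { pk: "TALK#1", sk: "META", title: "Typescript", speaker: "Mr TS" },
  { pk: "TALK#1", sk: "VOTE#alice", score: 5 },
  { pk: "TALK#1", sk: "VOTE#bob", score: 4 },
  { pk: "TALK#2", sk: "META", title: "Why we should give up on Java", speaker: "Mrs Java" },
];

// Imitates a DynamoDB Query: exact match on pk, optional prefix match on sk.
function query(items: Item[], pk: string, skPrefix = ""): Item[] {
  return items.filter(item => item.pk === pk && item.sk.startsWith(skPrefix));
}

// One access pattern: all votes for a talk, without touching other talks.
const votesForTalk1 = query(table, "TALK#1", "VOTE#");
console.log(votesForTalk1.length);

// Another: the talk and everything attached to it in a single query.
const talk1WithVotes = query(table, "TALK#1");
console.log(talk1WithVotes.length);
```

Because each access pattern maps to one key lookup, the database only reads the items it returns. A common companion to this layout is a global secondary index that swaps the two keys, so the same items can be looked up from the other direction (for example, everything a given user has voted on).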

If you’ve worked with GraphQL before but haven’t seen Apollo, I would urge you to check it out. It’s a great library built to work with the popular JavaScript frameworks as well as native Android and iOS clients. Building simple, connected components is very straightforward, and testing them is just as simple.

For example, on the main page in Talkback, there’s a query which runs:

```tsx
<Query query={LIST_TALKS} fetchPolicy={'network-only'}>
  {({ loading, error, data }) => {
    if (loading) {
      return (
        <Loader active={true} inline="centered" content="Getting the latest talks for you..." />
      );
    }
    if (error) return <ErrorMessageComponent />;
    // filteredTalks is presumably derived from data.listTalks.items;
    // that filtering step is not shown in this excerpt.
    return (
      <Container>
        <div className="talk-card-holder">
          {filteredTalks.length > 0 ? (
            filteredTalks.map(talk => (
              <div key={talk.id} className="talk-card">
                <TalkDetails talk={talk} />
              </div>
            ))
          ) : null}
        </div>
      </Container>
    );
  }}
</Query>
```

And our GraphQL just asks for the data it needs:

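```typescript
// Assumes the graphql-tag package, imported under the name used below.
import graphqlTag from "graphql-tag";

// Exported so that components and tests can share the same query document.
export const LIST_TALKS = graphqlTag`
  query listTalks {
    listTalks {
      items {
        avatar
        date
        description
        id
        speaker
        myVote {
          id
          score
          comment
        }
        title
      }
    }
  }
`;
```

The above snippet is a quick example of one of the components requesting data from the AppSync API. The best part is that it’s also really simple to test: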

In the example below we set up two talks to be returned from the API, and assert that two divs with the class talk-card turn up. This is just one of many tests, but it gives you a taste of how straightforward testing is.

```tsx
import * as React from "react";
// Enzyme setup. The adapter package is an assumption (it matches React 16),
// and the default import assumes esModuleInterop is enabled.
import { configure, mount, ReactWrapper } from "enzyme";
import Adapter from "enzyme-adapter-react-16";
import { MockedProvider, MockedResponse } from "react-apollo/test-utils";
import { MemoryRouter } from "react-router";
import { ITalk } from "../../interfaces/talk";
import { LIST_TALKS } from "../../queries/talks";
import { FilterEnum } from "../Landing/Landing";
import UpcomingTalks from "./UpcomingTalks";

configure({ adapter: new Adapter() });

// Hypothetical helper (not shown in the original excerpt): resolves after
// `ms` milliseconds so the mocked query has a chance to return.
const delay = (ms: number) => new Promise<void>(resolve => setTimeout(resolve, ms));

// Divide by 1000 because dynamo stores unix epoch timestamp
// in seconds, and JS uses milliseconds.
const dateToBeTested: number = Date.now() / 1000;

const talks: ITalk[] = [
  {
    avatar: [],
    date: dateToBeTested,
    description: "Introduction to Typescript",
    id: "5b6b10cd-205e-404d-afc4-f08de7bce5d5",
    myVote: null,
    speaker: "Mr TS",
    title: "Typescript",
  },
  {
    avatar: [],
    date: dateToBeTested,
    description: "The Java Experience",
    id: "47390dd5-99a8-4784-8172-8dab8b800953",
    myVote: null,
    speaker: "Mrs Java",
    title: "Why we should give up on Java",
  },
];

// The mocked response MockedProvider returns when LIST_TALKS is requested.
const mocks: MockedResponse[] = [
  {
    request: { query: LIST_TALKS },
    result: {
      data: {
        listTalks: { items: talks },
      },
    },
  },
];

describe("UpcomingTalks", () => {
  it("should show details for each talk", async () => {
    const upcomingTalks: ReactWrapper = mount(
      <MockedProvider mocks={mocks} addTypename={false}>
        <MemoryRouter>
          <UpcomingTalks filterType={FilterEnum.ALL} />
        </MemoryRouter>
      </MockedProvider>
    );
    // Let the mocked query resolve, then re-render before asserting.
    await delay(1);
    upcomingTalks.update();
    expect(upcomingTalks.find(".talk-card").length).toEqual(2);
  });
});
```

With solid test coverage, we could keep making rapid changes without breaking functionality, and we stayed confident in the application as we built out features. This let us continually push changes through our pipeline straight into production. Even on the day of the conference, we pushed a live fix for a cookie-related bug, confident that attendees could keep using the app without it going offline during the event!

Bringing new meaning to the phrase “live updates” #talkback #Latency2018 pic.twitter.com/u7PcKtFdMg

— JK (@jgunnink) November 15, 2018

I don’t have the figures from the day for how much it would have cost, if anything, but we ended up with over 300 votes on the various talks, with plenty of great comments to help speakers improve in the future.

Being serverless, the application scaled out based on demand, and when the day was over there were no changes to make and no servers to shut down. We only paid for the usage we went through. For context, the AWS free tier covers a lot:

DynamoDB gives you 25 GB of storage and 25 units of read/write capacity, and can handle 200M requests per month

Cognito provides the first 50,000 users for free

250,000 AppSync query or mutation calls per month for free

5 GB of free storage with S3

1 million free Lambda invocations

CodeCommit is free for the first 5 users

CodeBuild and CodePipeline provided more than enough free access

We may have some CloudFront costs associated with delivering the site over the AWS CDN

There are also other costs, such as domain registration

Hard to believe that a web app used by ~100 people simultaneously, pushing live data to and from our serverless backend, cost next to nothing. There’s nothing for us to maintain and nothing for us to patch; we only need to focus on building more value into the application. Pretty awesome.