Rails on Kubernetes

Run Rails on Kubernetes on Google Cloud Platform. Reduce costs with preemptible VMs and set up a load balancer.

For some time, I’ve run my fun side-project site, Secret Santa, on Heroku. In fact, the site has been there for over three years. Heroku is great for developers wanting to test out their ideas, as it has a wonderful free tier of web servers, which has served me well over the past few years. Having spent the last 18 months with my feet firmly planted in the DevOps side of things, I wanted to experiment with some of the things I’ve learned along the way and get my Ruby on Rails application onto the world’s fastest growing container orchestration engine, Kubernetes. In this post, I will discuss containerising a Ruby on Rails app with two service dependencies, Redis and a Postgres database, running on Google Cloud Platform in Google Kubernetes Engine.

Before we begin, it’s worth mentioning that I have taken the approach of making as much of my infrastructure as stateless as possible. I set myself some conditions, a challenge if you will, that:

My application has to survive if all the compute is taken down or disaster strikes

It has to be able to scale out horizontally if the service suddenly gets busy

I have to keep costs down to a minimum, and,

The infrastructure should be “as-code” so that if disaster strikes, we can get back up and running FAST.

Let’s get stuck in!

Preparing the application

To start with, I had to containerise my app. What is a container? Read this first. For Rails, this involved a few steps.

Firstly, you have to decide which operating system layer to start with. For many, Alpine Linux is a great choice because it is one of the smallest Linux distributions you can get, doesn’t include any cruft you don’t need, and is hardened out of the box, which makes the image a great starting point.

Next, we’ll update the repository source lists so that we can install the software required for the application using the apk package manager that comes with Alpine.

The next layer in the container build adds the required software. Being a Rails app that uses Postgres as its database layer, we’ll need nodejs and postgresql-dev so that the application can run its JavaScript and so that the postgres gem can be installed in a subsequent step.

Optionally, I chose to run this command so that installing the application’s gems doesn’t also install the documentation that comes with them:

RUN echo "gem: --no-rdoc --no-ri --env-shebang" >> "$HOME/.gemrc"

Then we create a working directory and set one of the environment variables, RAILS_ENV, to production.

The next stage is to copy over the gemfiles and install the gems required for production.

Then, we’ll copy the application code into the container.

With the code in the container, we can start optimising the container so that it starts as quickly as possible. We do this by running rake assets:precompile while the image is built, so we don’t need to do it every time the container starts. Then we’ll remove any directories the container no longer needs in order to run.

Finally, we can execute the start of our application.

Take a look here for the current state of the Dockerfile.
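For readers who want to see the steps above in one place, here is a minimal sketch of what such a Dockerfile could look like. It is not the actual Dockerfile linked above. The base image tag, the build-base package, the removed directories, port 3000 and the start command are all assumptions made for illustration, and it uses --no-document, the newer RubyGems spelling of the --no-rdoc --no-ri flags.

```shell
# Write a hypothetical Dockerfile that follows the steps described in the post.
cat > Dockerfile <<'EOF'
# Assumption: an official Ruby image on Alpine; the tag is a placeholder.
FROM ruby:2.6-alpine

# Refresh the package index, then install a compiler toolchain plus the
# two packages named in the post.
RUN apk update && apk add build-base nodejs postgresql-dev

# Skip gem documentation when installing gems.
RUN echo "gem: --no-document" >> "$HOME/.gemrc"

# Working directory and production environment.
WORKDIR /app
ENV RAILS_ENV=production

# Copy the gemfiles first so the bundle layer is cached between code changes.
COPY Gemfile Gemfile.lock ./
RUN bundle install --without development test

# Copy the application code.
COPY . .

# Precompile assets at build time so containers start quickly.
RUN bundle exec rake assets:precompile

# Remove directories the running container does not need (example paths).
RUN rm -rf spec test tmp/cache

# Assumption: the app listens on port 3000.
EXPOSE 3000
CMD ["bundle", "exec", "rails", "server", "-b", "0.0.0.0", "-p", "3000"]
EOF
```

Depending on your Rails version, the precompile step may need a placeholder SECRET_KEY_BASE at build time. You would build the image with something like docker build -t web . from the application root.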

With the Dockerfile in hand, there are two remaining pieces required for this particular application to start. The second piece of the puzzle I decided to tackle was Redis. It is an in-memory key/value store which I only use to temporarily store emails, names and list details, so I didn’t need to maintain any state here: as soon as the lock and assign is set, the emails are sent out and the list details are stored in the database. So Redis was an easy candidate. All I need to do is make the service available, and I can do that with a pre-built container provided by Redis over at Docker Hub.
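When it comes to deploying, making Redis available inside the cluster could look something like the sketch below: a single-replica Deployment using the public Redis image, plus a Service so other pods can reach it by name. The file name, the resource name redis and the image tag are assumptions, not taken from my actual files.

```shell
# Write a hypothetical manifest for a stateless, single-replica Redis.
cat > redis.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: redis
spec:
  replicas: 1
  selector:
    matchLabels:
      app: redis
  template:
    metadata:
      labels:
        app: redis
    spec:
      containers:
        - name: redis
          image: redis:5-alpine   # assumption: any recent official tag works
          ports:
            - containerPort: 6379
---
# Service so other pods can reach Redis at redis:6379 inside the cluster.
apiVersion: v1
kind: Service
metadata:
  name: redis
spec:
  selector:
    app: redis
  ports:
    - port: 6379
      targetPort: 6379
EOF
```

No volume is attached, which matches the decision not to keep any state in Redis. Applying it is a matter of kubectl apply -f redis.yaml.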

With Redis sorted, only the database tier of our web application remains. Utilising cloud infrastructure, you have a few options. You could use the managed Postgres service, Cloud SQL, but it’s pricey for a small application. I don’t necessarily need the compute and memory allocated to it, which at a minimum starts at 1 CPU and 3.75 GB RAM.

I’ll just pause here a minute. I’ve just looked this up to confirm, and it seems that Cloud SQL for Postgres is now available at cheaper tiers. At the time I started looking at this (about three weeks ago, before Next ’19), nothing cheaper than the ~$25/month tier was available (or I was blind). So I guess you have two options: continue with Cloud SQL, or use my method as described below. I’ll say that it’s usually better to go for a managed service (like Cloud SQL), as you get some things for free, like backups and high availability.

For this implementation, I decided to go with a “marketplace” solution for Postgres. This involved a “click-to-deploy” instance of Postgres from the VM marketplace. Once it was installed, I changed the machine type to f1-micro, which is the smallest unit of compute power available on Google Cloud Platform. It will run you about $5/month, and is eligible for the free tier. Given this instance is the database layer, I opted to make it a regular instance (as opposed to preemptible) and have set up scheduled snapshots as my “emergency” go-to if something were to happen. Being a small app with not much traffic most of the year, except around October, November and December, this is fine. If you had a bigger app with tens of thousands of users, or your data was absolutely critical, I wouldn’t recommend putting your database on a VM; use a managed relational database service such as Cloud SQL instead.

Deploying the application

With the three pieces of my application in hand, it’s time to start plumbing everything together. Firstly, I decided on the region. Sticking to one of the rules about cost, the US has the cheapest regions to host compute on GCP, so with that decision made, I created a VPC with a subnet for the application to live in. I then started up my Postgres VM inside that VPC, with a firewall rule specifying that only other machines inside the VPC can talk to the machine on a specified port, reducing exposure to the public internet. Second, I created my Kubernetes cluster with the following command:

    gcloud beta container --project "secretsanta-web" clusters create "secretsanta-cluster" \
      --region "us-central1" \
      --no-enable-basic-auth \
      --cluster-version "1.12.7-gke.7" \
      --machine-type "g1-small" \
      --image-type "COS" \
      --disk-type "pd-ssd" \
      --disk-size "10" \
      --metadata disable-legacy-endpoints=true \
      --scopes "https://www.googleapis.com/auth/cloud-platform" \
      --preemptible \
      --num-nodes "1" \
      --enable-cloud-logging \
      --enable-cloud-monitoring \
      --enable-ip-alias \
      --network "projects/secretsanta-web/global/networks/secretsanta-web-us" \
      --subnetwork "projects/secretsanta-web/regions/us-central1/subnetworks/secretsanta-web-us" \
      --default-max-pods-per-node "110" \
      --enable-autoscaling \
      --min-nodes "1" \
      --max-nodes "3" \
      --addons HorizontalPodAutoscaling,HttpLoadBalancing \
      --enable-autoupgrade \
      --enable-autorepair

You may notice that I have enabled a feature which is currently in beta and, in my opinion, one of the best things about Google Cloud: preemptible instances. For the uninitiated, preemptible instances let you save up to 80% on the cost of compute power if, in exchange, you are happy for your VMs to be terminated at any time with 30 seconds’ notice, and to live for a maximum of 24 hours.

The application is designed to be stateless, with containers starting up and terminating at any time, so this suits me perfectly. And I get to save up to 80% on my compute costs! Awesome!

With my Kubernetes cluster up, it’s time to apply some services, pods and ingresses to the cluster to get the application to start working. Here’s the list of things we need:

Since redis can operate without anything depending on it, it makes sense to start this service first.

Next, we’ll need to provide a way for the application to reach our database, so let’s write a file which describes the endpoint and the service.

Now we can start our web tier since we have redis running and a way to communicate with the database.

Finally, we need a way to expose the web tier from inside the cluster to the outside world. We do that with an ingress service.
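For the database item in the list above, one common pattern is a Service without a selector paired with a manually defined Endpoints object of the same name, which lets pods reach the VM through a stable in-cluster name. The sketch below assumes the name postgres, the default Postgres port 5432 and a made-up internal IP address; substitute the internal IP of your own VM.

```shell
# Write a hypothetical manifest that maps an in-cluster name to the Postgres VM.
cat > postgres-external.yaml <<'EOF'
# Service without a selector: Kubernetes will not manage its endpoints.
apiVersion: v1
kind: Service
metadata:
  name: postgres
spec:
  ports:
    - port: 5432
      targetPort: 5432
---
# Endpoints object with the same name as the Service, pointing at the VM.
apiVersion: v1
kind: Endpoints
metadata:
  name: postgres
subsets:
  - addresses:
      - ip: 10.128.0.2   # assumption: internal IP of the Postgres VM in the VPC
    ports:
      - port: 5432
EOF
```

With this applied, the application can use postgres as its database host, and only this one file changes if the VM’s address ever does.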

Realistically, you can run all these files at the same time and Kubernetes will start all of them, and they will all come up eventually, with the pods just starting and restarting until they are healthy and happy. For example, if you started the web tier first and Redis wasn’t running yet, the web server would start, be unable to connect to Redis, and then shut down and restart. It would keep doing that until it could connect to the services it needed.
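To make the web tier concrete, here is a rough sketch of a Deployment and a NodePort Service for the Rails container. The image path, the environment variable names, the replica count and the probe settings are all assumptions for illustration; the readiness probe is one way to keep traffic away from a pod until it can actually serve requests.

```shell
# Write a hypothetical manifest for the Rails web tier.
cat > web.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
spec:
  replicas: 2
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
        - name: web
          image: gcr.io/secretsanta-web/web:latest   # assumption: image path
          ports:
            - containerPort: 3000
          env:
            # Assumption: the app reads these variable names.
            - name: REDIS_URL
              value: redis://redis:6379/0
            - name: DATABASE_HOST
              value: postgres
          # Only send traffic once the app answers HTTP requests.
          readinessProbe:
            httpGet:
              path: /
              port: 3000
            initialDelaySeconds: 10
            periodSeconds: 5
---
# NodePort Service so a load balancer can reach the pods on each node.
apiVersion: v1
kind: Service
metadata:
  name: web
spec:
  type: NodePort
  selector:
    app: web
  ports:
    - port: 80
      targetPort: 3000
EOF
```

Because a Deployment restarts containers that exit, a web pod that cannot reach Redis or Postgres at boot simply gets another attempt, which is the behaviour described above.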

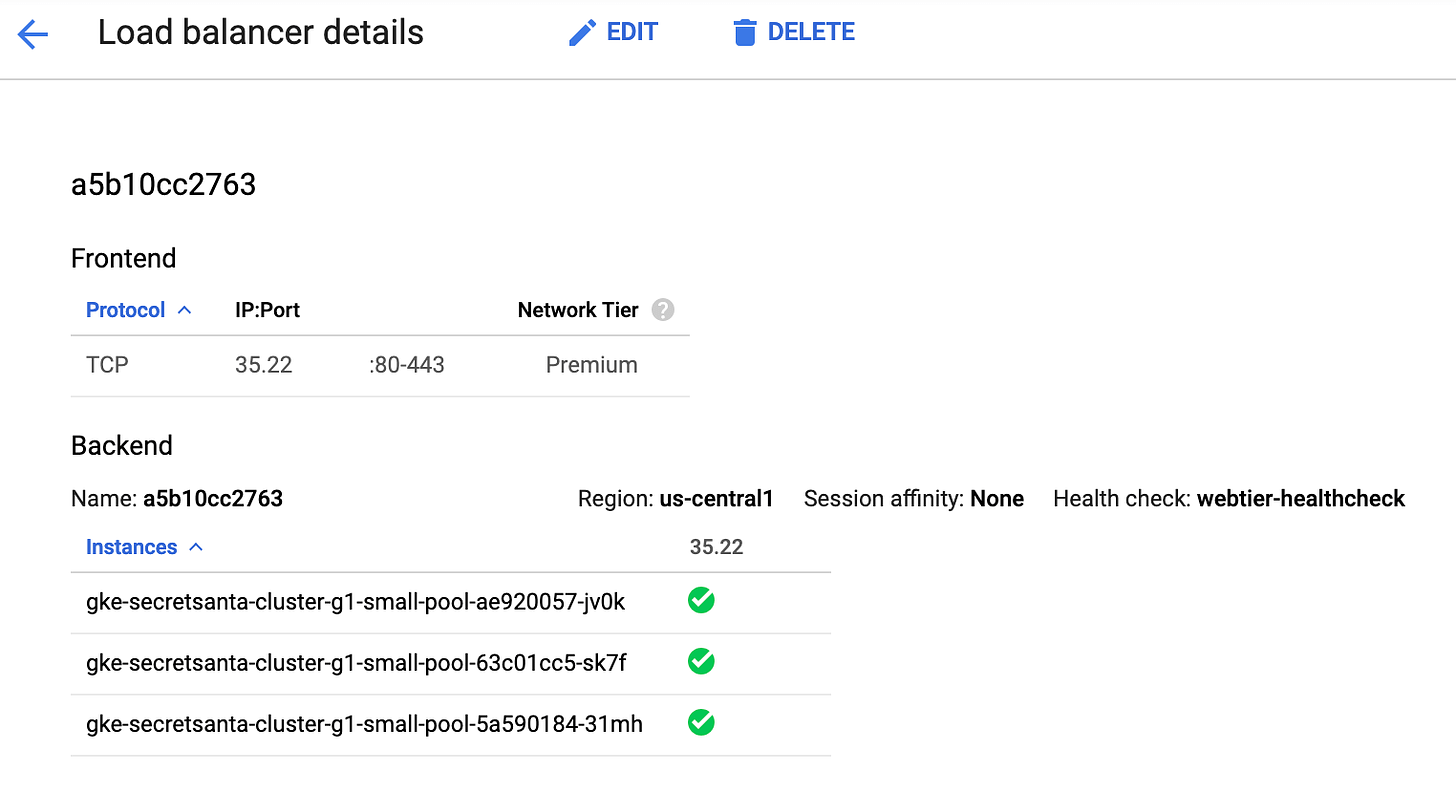

Looking in the GCP console under Network Services -> Load Balancing, we can now see our load balancer with the three cluster nodes we have running!

Using the IP address on this page, we can now connect to the web application using either port 80 or 443, noting that you’d need to do additional work to get SSL termination working on your load balancer before any browser worth using would let you access the page.
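On GKE, a load balancer like this one is what gets created for an Ingress resource. A minimal sketch is below. It assumes a NodePort Service named web listening on port 80 already exists (a hypothetical name), and it uses the extensions/v1beta1 API that matches a 1.12 cluster; newer clusters use networking.k8s.io/v1, which has a different backend schema.

```shell
# Write a hypothetical Ingress that sends all traffic to the web Service.
cat > ingress.yaml <<'EOF'
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: web
spec:
  # Default backend: every request goes to the web Service on port 80.
  backend:
    serviceName: web
    servicePort: 80
EOF
```

After kubectl apply -f ingress.yaml it can take a few minutes for the external IP address to appear and for the backends to report healthy.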

Re-capping

We’ve:

set up a Postgres database running on a standard VM with the smallest unit of compute power available, an f1-micro instance.

deployed a Kubernetes cluster in the us-central1 region

defined the services, load balancers and ingresses we need for the cluster

deployed all of this infrastructure “as code”. If we (or someone else who has access) accidentally delete the infrastructure, we can just run our scripts to get back up and running.

kept our costs low. You can see this link for an idea of the total cost.

Total cost: USD 38.69 per 1 month.

Too high? There’s definitely room to cut costs.

For example, you could run your own nginx container as the ingress to your cluster rather than use a load balancer, which would save you $18.26 right off the bat. Other savings might come from switching out SSD disks for standard HDDs. You could also switch to a single zone (a zonal rather than regional cluster) and set the scaling policy down to 1 node, which would save you on compute.

The post was already getting long enough but this gives you an idea. I think you might be able to get the costs to around $15 if you wanted it badly enough. :-)
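As a quick sanity check on those numbers, the snippet below subtracts the load balancer share from the monthly total. Both figures are the ones quoted in this post; the variable names are just for illustration.

```shell
# Figures quoted in the post (USD per month).
total=38.69
load_balancer=18.26

# awk handles the decimal arithmetic that plain shell arithmetic cannot.
without_lb=$(awk -v t="$total" -v lb="$load_balancer" 'BEGIN { printf "%.2f", t - lb }')

echo "Without the load balancer: USD $without_lb per month"
```

That leaves roughly $20.43, so getting down to around $15 would still need another $5 or so from the disk and node-count changes.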

Always keep in mind that saving costs somewhere means you give up something somewhere else, for example your time or your users’ experience. You may not want to manage another container just for load balancing, plus you then can’t take advantage of Google’s premium network with entry at the nearest edge location. Switching out that SSD might mean you scale out horizontally too slowly, causing 5xx errors for your users.

Next steps

We’ve covered a lot of ground getting our application up and running. But what’s next? Some things I need to look into include:

Monitoring. GKE and Stackdriver provide excellent monitoring tools for insights into how your application is performing as well as helping to identify errors and potential problems.

Migrating from Heroku to GCP. I have three years’ worth of user data in my database on the Heroku platform, and I’ll need to migrate that over.

SSL. Any website in 2019 needs to have SSL. Modern browsers will warn you that the website is not secure. Given we deal with emails, passwords and names, as well as accept fees from PayPal, we definitely need to organise an SSL certificate to be installed.

Stress testing. You can do this with an online tool I heard about called Loader, by SendGrid. Worth a look to see how your application performs under stress.

Resources

I used many resources in putting this post together. One of the best and probably most underrated features on Google Cloud is the little link found at the bottom of most of the configuration pages for the resources you’re configuring. Usually it says “Equivalent REST”, but you can also find the YAML versions when working with the Kubernetes Engine. These little links are absolute nuggets of gold, as they give you a command-line equivalent of the infrastructure you’re creating, which is what I’ve used as the files in this post. Look for this at the bottom of most pages:

Other resources included: